About Me

Hi there! I'm a software developer and designer who loves solving problems. Whether the solution is a website, a mobile app, or other custom software, I enjoy building things that meet my clients' needs. I've designed and developed on numerous frameworks and platforms, mostly for web and mobile apps, and I love learning a new language or framework to solve a unique problem.

Work Experience

Senior Software Engineer

at Leiten

June 2020 - Present

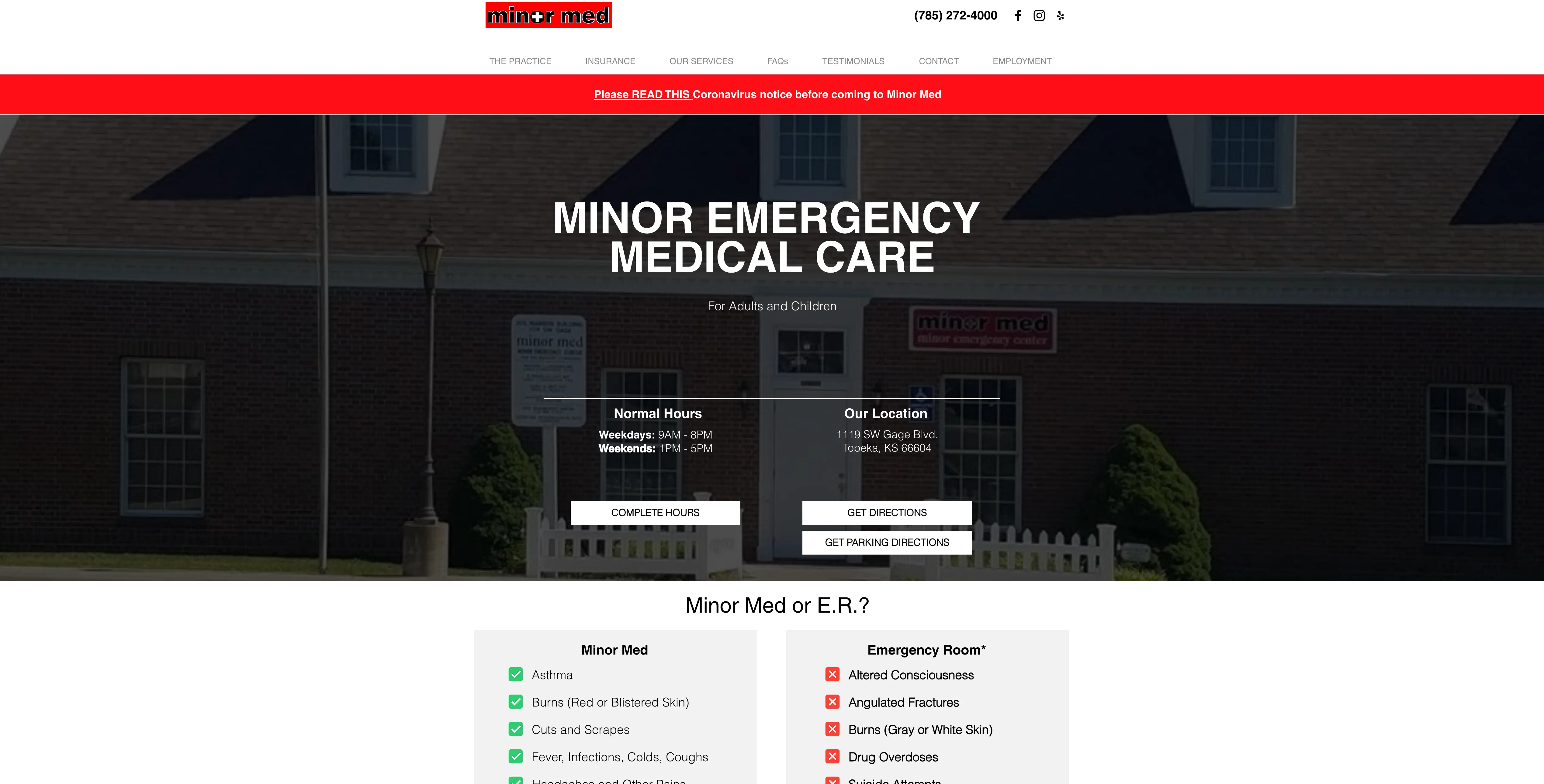

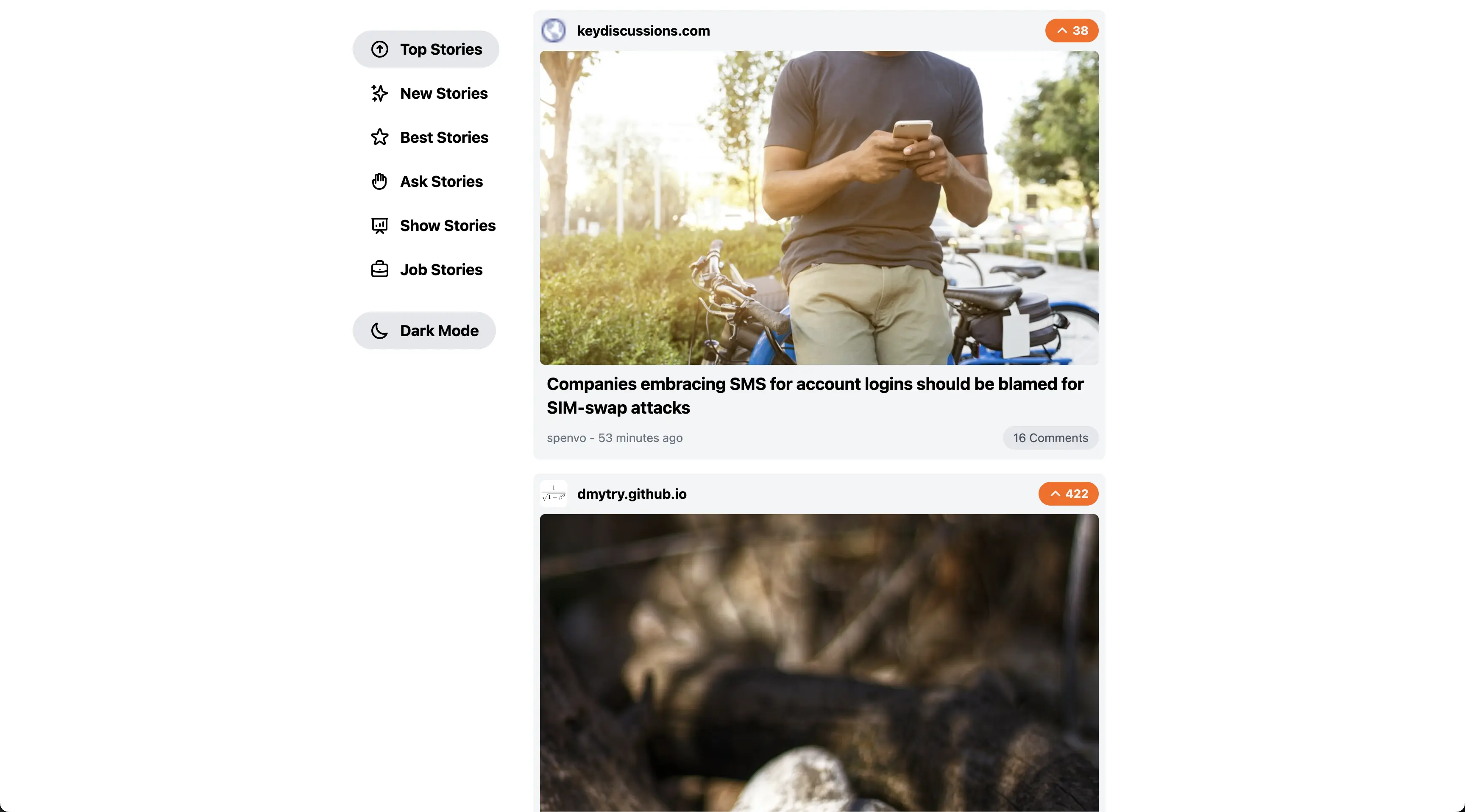

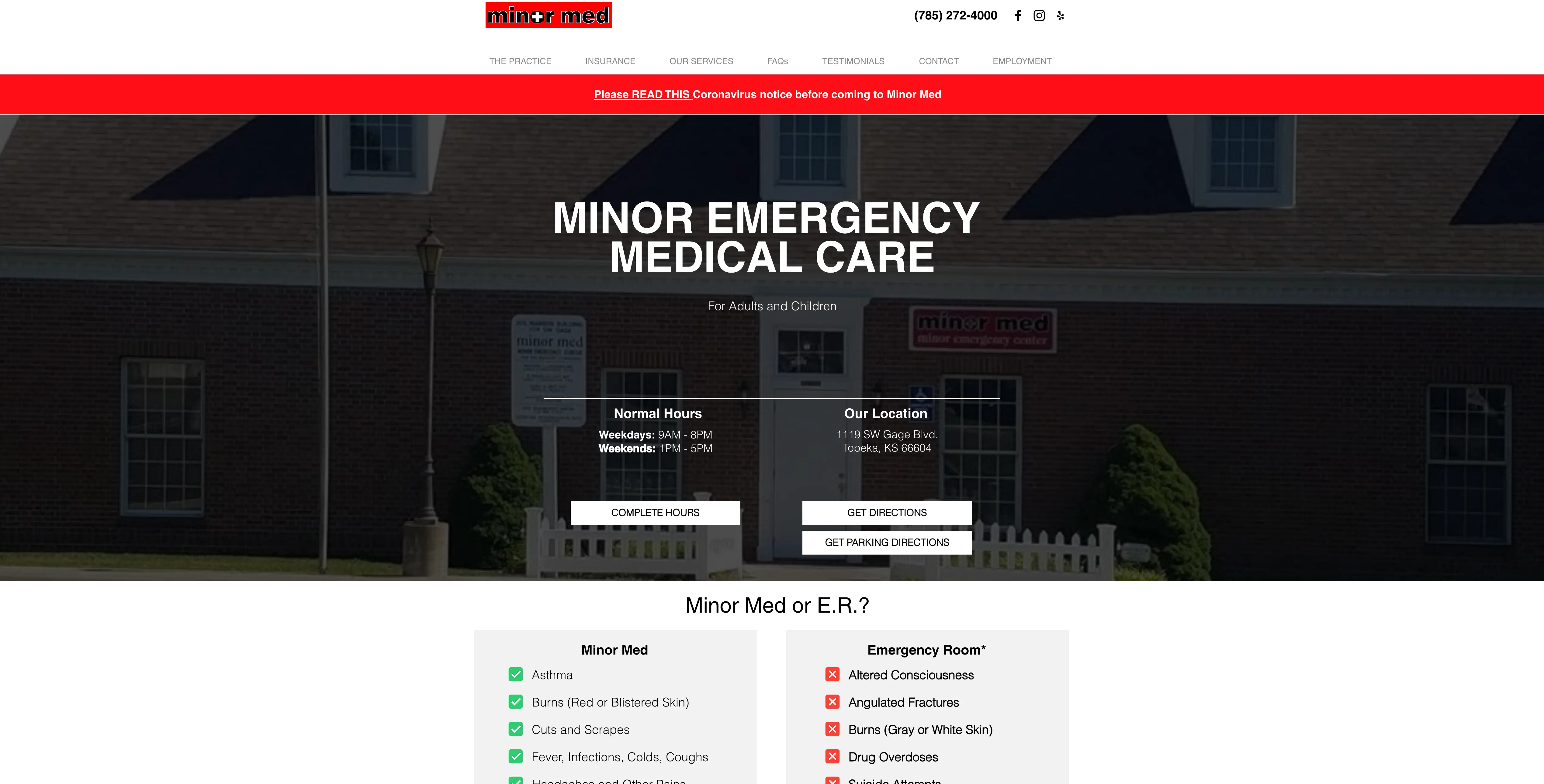

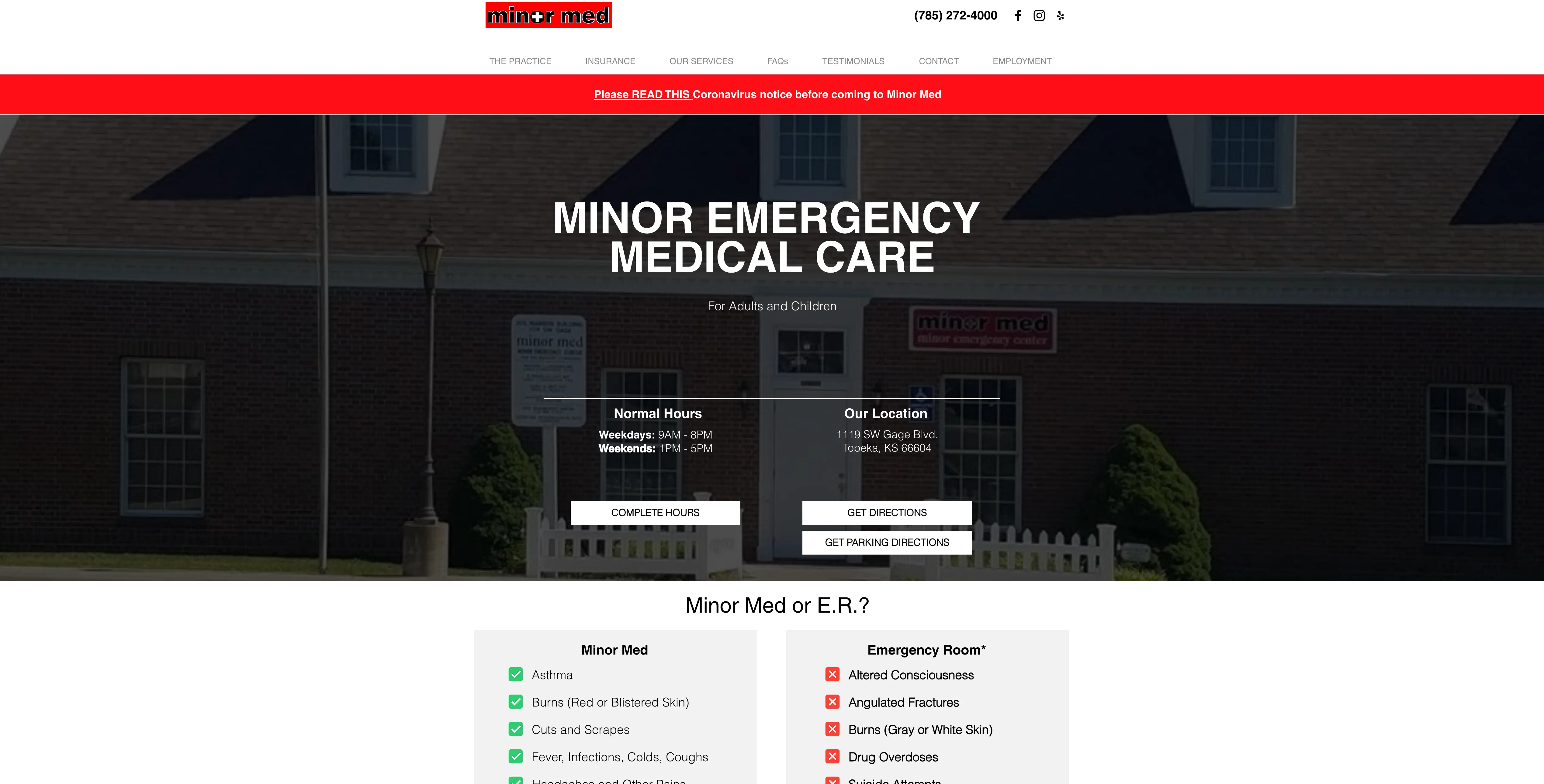

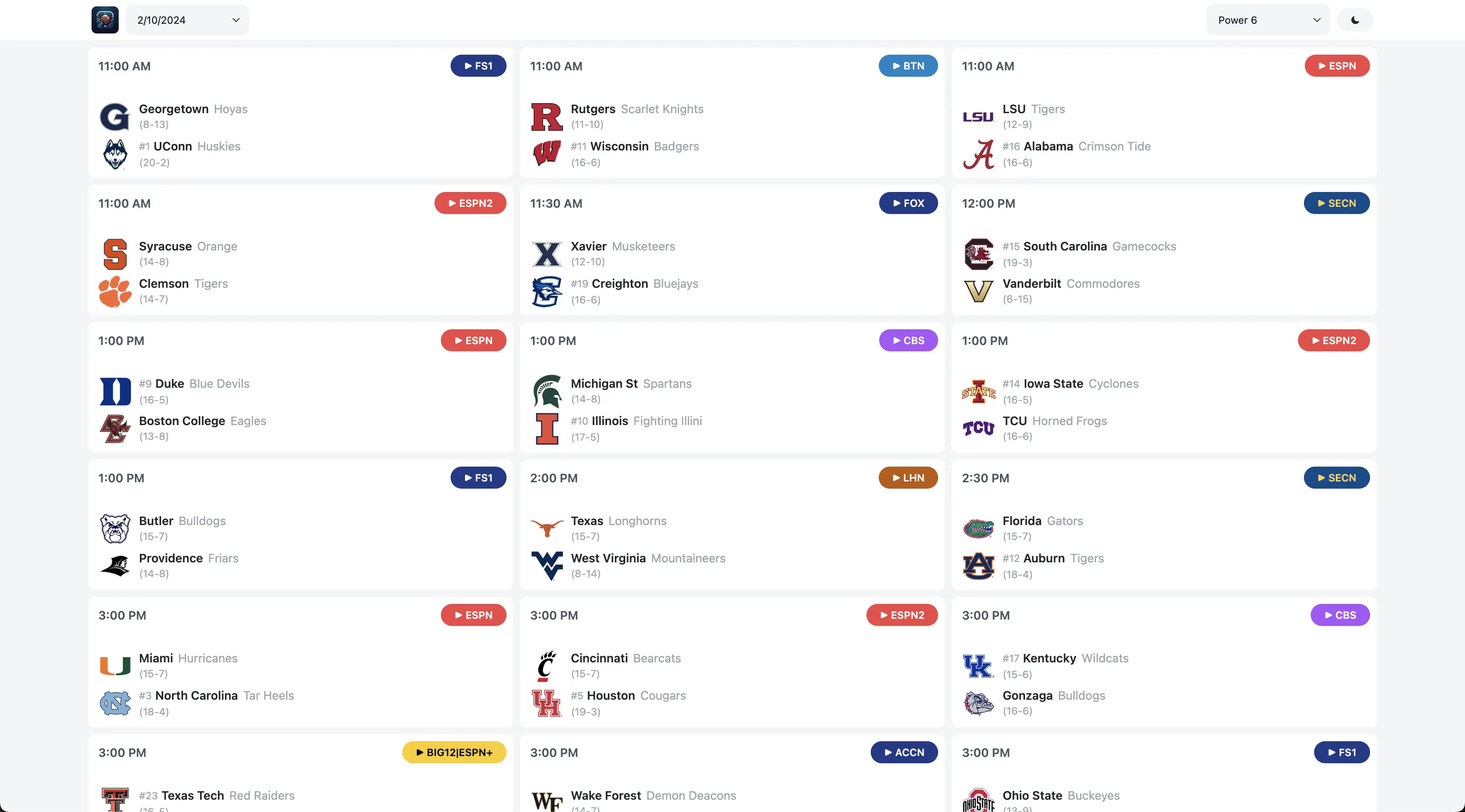

Freelance Website and Mobile App Developer

August 2014 - Present

Worship Coordinator

at Mission Church

October 2022 - August 2024

Web Developer

at Agrinux Solutions

July 2019 - January 2020

IT Intern

at Vanderbilt's

May 2019 - July 2019

Undergraduate Research Assistant

at Washburn University

July 2018 - May 2019

Web Development Intern

at Imagemakers

July 2016 - January 2017